Only applicable if y_true and y_pred are missing. Please see this custom training tutorial for more details. When used with tf$distribute$Strategy, outside of built-in training loops such as compile and fit, using AUTO or SUM_OVER_BATCH_SIZE will raise an error. For almost all cases this defaults to SUM_OVER_BATCH_SIZE. AUTO indicates that the reduction option will be determined by the usage context.

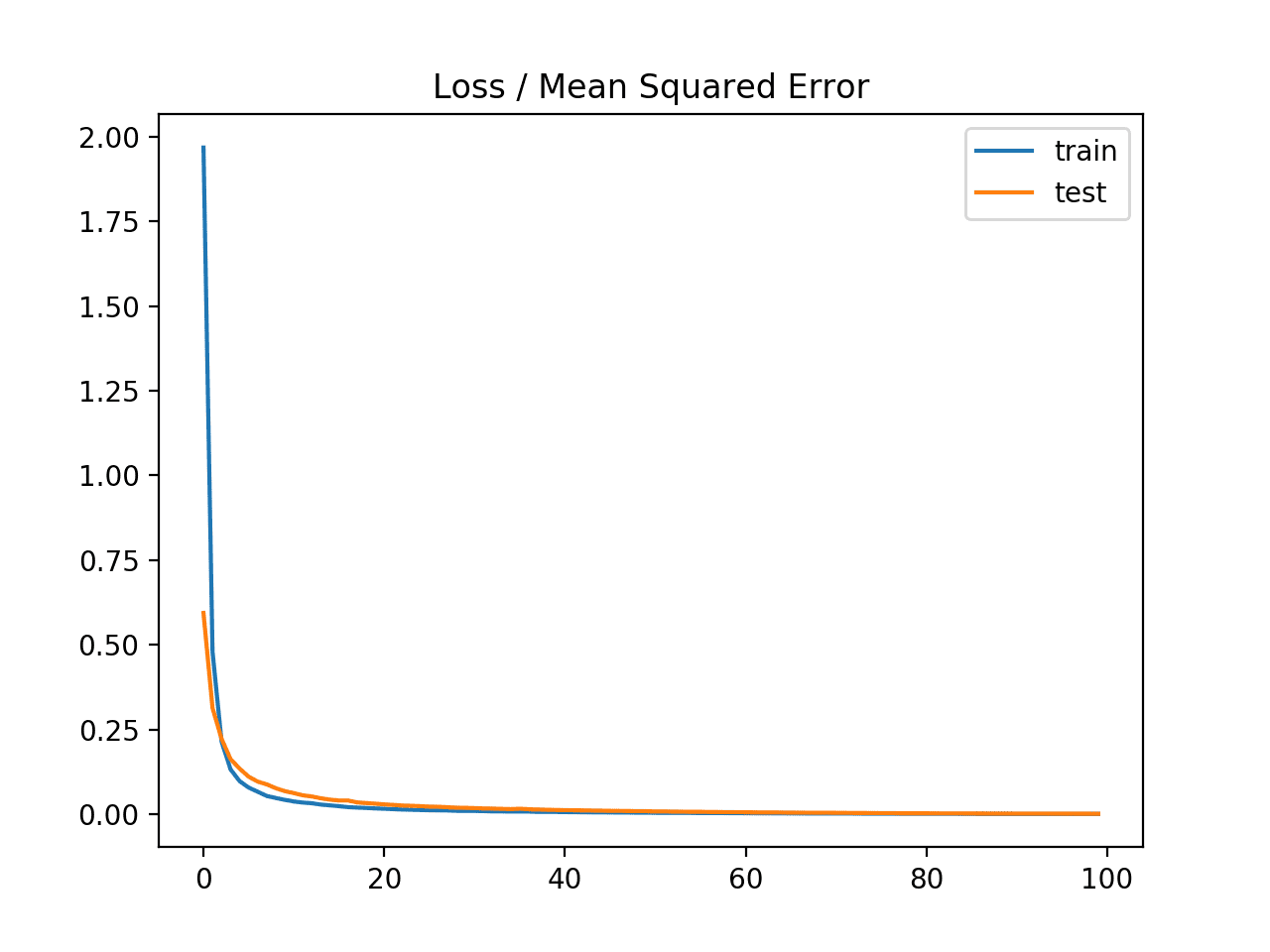

Type of keras$losses$Reduction to apply to loss. Defaults to -1 (the last axis).Īdditional arguments passed on to the Python callable (for forward and backwards compatibility). Axis is 1-based (e.g, first axis is axis=1). The axis along which to compute crossentropy (the features axis). For example, if 0.1, use 0.1 / num_classes for non-target labels and 0.9 + 0.1 / num_classes for target labels. By default we assume that y_pred encodes a probability distribution.įloat in. Whether y_pred is expected to be a logits tensor. , reduction = "auto", name = "squared_hinge" ) Arguments Arguments , reduction = "auto", name = "sparse_categorical_crossentropy" ) loss_squared_hinge( y_true, y_pred. , reduction = "auto", name = "poisson") loss_sparse_categorical_crossentropy( y_true, y_pred, from_logits = FALSE, axis = -1L. , reduction = "auto", name = "mean_squared_logarithmic_error" ) loss_poisson(y_true, y_pred. , reduction = "auto", name = "mean_squared_error" ) loss_mean_squared_logarithmic_error( y_true, y_pred. , reduction = "auto", name = "mean_absolute_percentage_error" ) loss_mean_squared_error( y_true, y_pred. , reduction = "auto", name = "mean_absolute_error" ) loss_mean_absolute_percentage_error( y_true, y_pred.

, reduction = "auto", name = "log_cosh") loss_mean_absolute_error( y_true, y_pred. , reduction = "auto", name = "kl_divergence" ) loss_logcosh(y_true, y_pred. , reduction = "auto", name = "kl_divergence" ) loss_kl_divergence( y_true, y_pred. , reduction = "auto", name = "huber_loss" ) loss_kullback_leibler_divergence( y_true, y_pred.

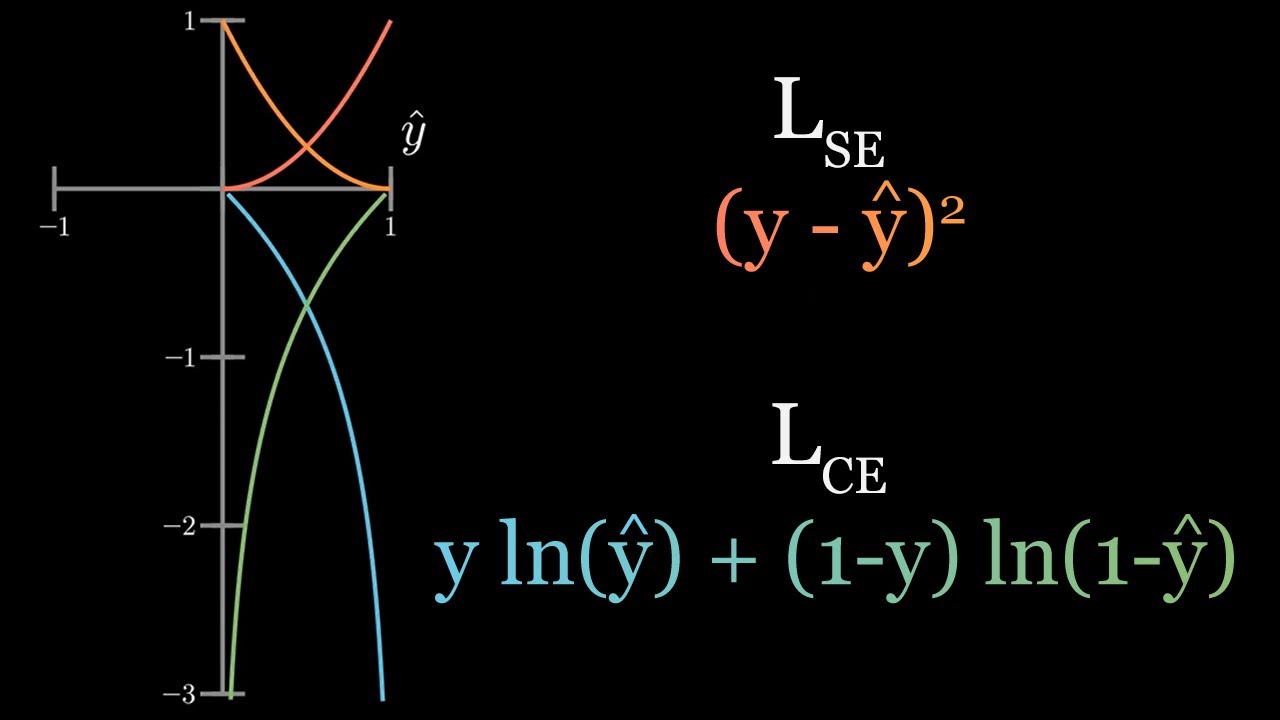

, reduction = "auto", name = "hinge") loss_huber( y_true, y_pred, delta = 1. , reduction = "auto", name = "cosine_similarity" ) loss_hinge(y_true, y_pred. , reduction = "auto", name = "categorical_hinge" ) loss_cosine_similarity( y_true, y_pred, axis = -1L. , reduction = "auto", name = "categorical_crossentropy" ) loss_categorical_hinge( y_true, y_pred. , reduction = "auto", name = "binary_crossentropy" ) loss_categorical_crossentropy( y_true, y_pred, from_logits = FALSE, label_smoothing = 0L, axis = -1L. Loss_binary_crossentropy( y_true, y_pred, from_logits = FALSE, label_smoothing = 0, axis = -1L.
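The `label_smoothing` rule can be checked with a few lines of base R. This is a minimal sketch that does not call keras: the helper `smooth_labels()` is hypothetical and assumes one-hot targets stored row-wise in a matrix, with classes along the last axis (the columns).

```r
# Hypothetical helper (not part of keras): apply label smoothing to one-hot
# targets whose classes run along the last axis (the columns of a matrix).
smooth_labels <- function(y_true, label_smoothing) {
  stopifnot(label_smoothing >= 0, label_smoothing <= 1)
  num_classes <- ncol(y_true)
  # Target entries become (1 - s) + s / K; non-target entries become s / K.
  y_true * (1 - label_smoothing) + label_smoothing / num_classes
}

y_onehot <- matrix(c(0, 1, 0,
                     1, 0, 0), nrow = 2, byrow = TRUE)
smoothed <- smooth_labels(y_onehot, 0.1)
print(smoothed)
```

Each smoothed row still sums to 1, because the target loses s and the K classes each gain s / K.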
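To illustrate what `from_logits` changes, the base R sketch below computes binary crossentropy two ways: from probabilities, and from raw scores (logits) with the sigmoid folded into the loss. Both helpers are hypothetical and not the keras implementation; the clipping constant `eps = 1e-7` is an assumption of the sketch. In keras itself the corresponding choice would be `loss_binary_crossentropy(from_logits = TRUE)` for a model whose last layer has no activation.

```r
# Hypothetical sketch: binary crossentropy averaged over the last axis.
bce_prob <- function(y_true, y_pred, eps = 1e-7) {
  # Clip probabilities away from 0 and 1 so log() stays finite.
  p <- pmin(pmax(y_pred, eps), 1 - eps)
  -rowMeans(y_true * log(p) + (1 - y_true) * log(1 - p))
}

bce_logits <- function(y_true, logits) {
  # Numerically stable form of -[y log(sigmoid(z)) + (1 - y) log(1 - sigmoid(z))]:
  # max(z, 0) - z * y + log(1 + exp(-|z|)).
  rowMeans(pmax(logits, 0) - logits * y_true + log1p(exp(-abs(logits))))
}

y_bin <- matrix(c(1, 0, 1, 0), nrow = 2)        # column-major fill
z <- matrix(c(2.0, -1.0, 0.5, 3.0), nrow = 2)   # raw scores
from_logits <- bce_logits(y_bin, z)
from_probs <- bce_prob(y_bin, plogis(z))        # plogis() is the sigmoid
print(cbind(from_logits, from_probs))
```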
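The `reduction` options decide how per-sample losses become one number. The sketch below mirrors three of the `keras$losses$Reduction` choices on a plain vector of per-sample losses; `reduce_loss()` is a hypothetical helper, and it leaves out `"auto"`, which in most contexts resolves to `"sum_over_batch_size"`.

```r
# Hypothetical helper: reduce a vector of per-sample losses the way the
# keras Reduction options describe (one value per batch element).
reduce_loss <- function(per_sample,
                        reduction = c("sum_over_batch_size", "sum", "none")) {
  reduction <- match.arg(reduction)
  switch(reduction,
    none = per_sample,                                          # one loss per sample
    sum = sum(per_sample),                                      # total over the batch
    sum_over_batch_size = sum(per_sample) / length(per_sample)  # batch mean
  )
}

losses <- c(0.2, 0.4, 0.9)
reduce_loss(losses)            # the usual result of "auto"
reduce_loss(losses, "sum")
reduce_loss(losses, "none")
```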
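`loss_huber()` takes a `delta` argument (default 1), the error size at which the loss switches from quadratic to linear. A minimal base R sketch of that piecewise rule, assuming a single sample averaged over its last axis; `huber_sketch()` is hypothetical, not the keras function.

```r
# Hypothetical sketch of the Huber loss for one sample.
huber_sketch <- function(y_true, y_pred, delta = 1) {
  err <- abs(y_true - y_pred)
  # Quadratic for |err| <= delta, linear beyond; both pieces equal
  # 0.5 * delta^2 at err == delta, so the loss is continuous.
  per_elem <- ifelse(err <= delta, 0.5 * err^2, delta * err - 0.5 * delta^2)
  mean(per_elem)
}

huber_sketch(c(0, 0), c(0.5, 2))             # second error is in the linear part
huber_sketch(c(0, 0), c(0.5, 2), delta = 3)  # both errors are quadratic
```

A larger `delta` makes the loss behave more like squared error; a smaller one makes it behave more like absolute error and less sensitive to outliers.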
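`loss_categorical_crossentropy()` takes one-hot targets, while `loss_sparse_categorical_crossentropy()` takes integer class labels; for the same data both give the same per-sample loss. The base R sketch below shows this with class probabilities along the last axis (columns). Both helpers are hypothetical, and the class ids are 1-based purely for R indexing (an assumption of this sketch).

```r
# Hypothetical sketch: categorical crossentropy per sample (row).
cce_sketch <- function(y_true, y_pred) {
  -rowSums(y_true * log(y_pred))
}

# Sparse variant: pick the predicted probability of each true class.
# class_id is 1-based here for R indexing (assumption of this sketch).
sparse_cce_sketch <- function(class_id, y_pred) {
  -log(y_pred[cbind(seq_len(nrow(y_pred)), class_id)])
}

p <- matrix(c(0.7, 0.2, 0.1,
              0.1, 0.3, 0.6), nrow = 2, byrow = TRUE)
ids <- c(1, 3)
one_hot <- diag(3)[ids, ]
cce_sketch(one_hot, p)
sparse_cce_sketch(ids, p)
```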
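For the regression-style losses in the usage list, the textbook definitions fit on one line each. This is a hedged base R sketch for a single sample averaged over its last axis; the `*_sketch` names are hypothetical, and keras additionally clips some inputs with a small epsilon and uses a numerically stable log-cosh, which the sketch omits.

```r
# Hypothetical one-sample sketches of the regression losses.
mse_sketch     <- function(y_true, y_pred) mean((y_true - y_pred)^2)
mae_sketch     <- function(y_true, y_pred) mean(abs(y_true - y_pred))
mape_sketch    <- function(y_true, y_pred) 100 * mean(abs((y_true - y_pred) / y_true))
msle_sketch    <- function(y_true, y_pred) mean((log1p(y_true) - log1p(y_pred))^2)
logcosh_sketch <- function(y_true, y_pred) mean(log(cosh(y_pred - y_true)))
poisson_sketch <- function(y_true, y_pred) mean(y_pred - y_true * log(y_pred))

y_obs <- c(1, 2, 4)
y_hat <- c(1.5, 2, 3)
sapply(list(mse = mse_sketch, mae = mae_sketch, mape = mape_sketch,
            msle = msle_sketch, logcosh = logcosh_sketch,
            poisson = poisson_sketch),
       function(f) f(y_obs, y_hat))
```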
